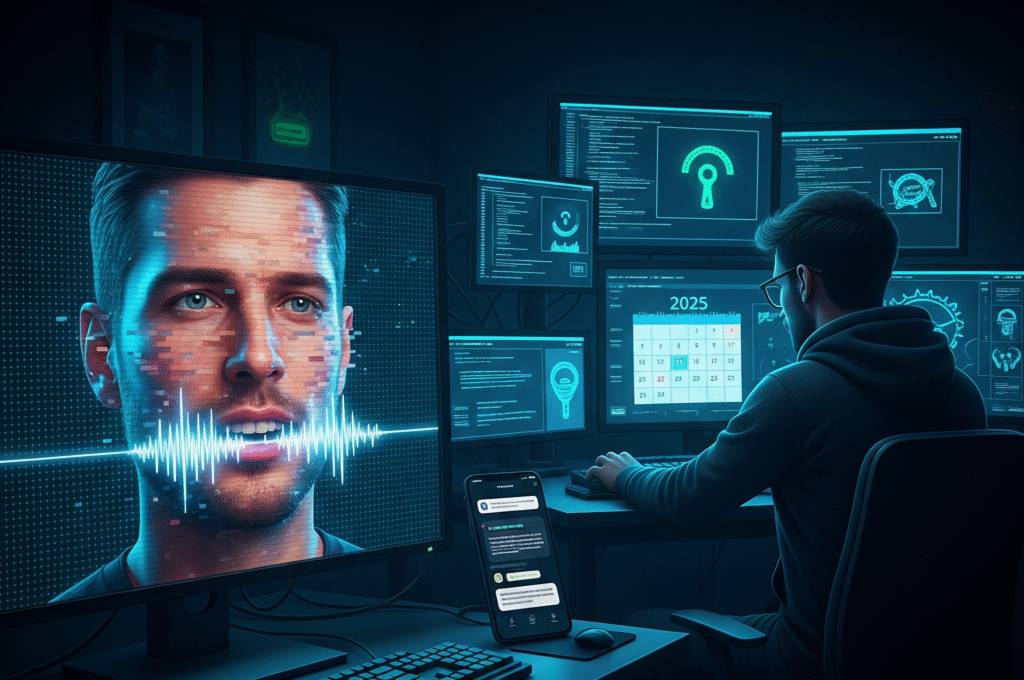

Phishing has long been the go-to weapon for cybercriminals. Traditionally, it relied on poorly written emails, suspicious links, or fake login pages. But in 2025, phishing has evolved into something far more dangerous: deepfake voice and video scams.

Using AI, attackers can now impersonate the voices and faces of trusted individuals with chilling accuracy. From CEOs to family members, no one is safe from this next-generation cyber threat.

📌 What Are Deepfake Voice & Video Scams?

Deepfakes are synthetic media created using artificial intelligence and machine learning.

- Voice Deepfakes: AI models trained on recordings of a person's speech (modern tools may need only a few seconds of audio) clone that person's voice, allowing attackers to make convincing phone calls or leave voice notes.

- Video Deepfakes: AI can generate realistic facial movements, expressions, and lip-sync, making it appear that a real person is speaking on camera even though they never said those words.

When combined with phishing techniques, these tools enable hackers to create ultra-realistic scams that bypass human suspicion.

⚠️ Real-World Cases of Deepfake Scams

- The CEO Voice Scam: In 2019, the CEO of a UK-based energy firm wired €220,000 (about $243,000) after a caller using an AI-cloned voice of his boss, the chief executive of the German parent company, asked for an urgent payment to a supplier.

- Fake Video Calls: In early 2024, an employee at the Hong Kong office of a multinational firm transferred about $25 million after a video conference in which the company's chief financial officer and several colleagues were all deepfakes.

- Family Emergency Scams: Criminals clone the voices of loved ones to trick people into sending money, pretending that a child or sibling urgently needs help.

These cases reveal just how persuasive and emotionally manipulative deepfake phishing can be.

🔍 Why Are Deepfakes So Dangerous?

- High Believability – Human ears and eyes struggle to distinguish between real and AI-generated media.

- Emotional Manipulation – Deepfakes exploit trust and urgency, two psychological levers in classic phishing.

- Low Barrier to Entry – Open-source AI models make it cheap and easy for attackers to create convincing fakes.

- Harder to Detect – Unlike suspicious emails, deepfake calls and videos are difficult to filter with traditional security tools.

🛡️ How to Protect Against Deepfake Phishing

- Verify Requests via Multiple Channels
  - If you receive an unexpected voice note, call, or video asking for money or data, confirm the request through a separate channel you already trust, such as calling the person back on a known number or checking in person.

- Use Codewords or Multi-Layer Verification
  - Companies can agree on unique verification codes for financial or sensitive transactions, and families can do the same for emergency calls.

- Adopt AI-Powered Deepfake Detection Tools
  - Security vendors are developing software that analyzes audio and video for signs of manipulation.

- Employee Awareness Training
  - Staff should be trained to recognize red flags: urgency, unusual requests, or unfamiliar contexts.

- Zero-Trust Policy
  - Never assume someone's identity based solely on their voice or face; treat every sensitive request with caution until it has been verified.
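The codeword and multi-channel advice can be combined into a simple approval rule. The Python sketch below is a hypothetical illustration, not a vendor product or a standard: the identity, codeword, salt, and the 10,000 threshold are all placeholder assumptions. Every sensitive request must carry a codeword registered in person ahead of time, and high-value requests additionally need confirmation by calling the person back on a number from the company directory. The channel (voice or video) is recorded but never counted as proof of identity. A static codeword can be overheard and reused, so treat this as a minimum; one-time codes are stronger.

```python
import hashlib
import hmac
from dataclasses import dataclass

# All names, secrets, and thresholds below are hypothetical placeholders.
SALT = b"replace-with-a-random-per-deployment-salt"
ITERATIONS = 100_000
HIGH_RISK_AMOUNT = 10_000  # requests at or above this also need a callback


def hash_codeword(codeword: str) -> bytes:
    """Derive a slow, salted hash so plain codewords are never stored."""
    return hashlib.pbkdf2_hmac("sha256", codeword.encode("utf-8"), SALT, ITERATIONS)


# Codeword hashes registered in person, ahead of time, for each known identity.
CODEWORD_HASHES = {"cfo@example.com": hash_codeword("blue-harbor-42")}


@dataclass
class Request:
    claimed_identity: str  # who the voice or face on the call claims to be
    channel: str           # "voice", "video", "email", ...; never proof on its own
    amount: float


def approve(request: Request, spoken_codeword: str, confirmed_by_callback: bool) -> bool:
    """Approve only if every verification layer passes.

    confirmed_by_callback means staff hung up and called the person back on a
    number from the company directory, not one supplied during the request.
    """
    expected = CODEWORD_HASHES.get(request.claimed_identity)
    if expected is None:
        return False  # unknown identity: escalate rather than approve
    # hmac.compare_digest compares in constant time, a good habit for secrets.
    codeword_ok = hmac.compare_digest(hash_codeword(spoken_codeword), expected)
    if request.amount < HIGH_RISK_AMOUNT:
        return codeword_ok
    # High-value requests need a second, independent channel as well.
    return codeword_ok and confirmed_by_callback


if __name__ == "__main__":
    req = Request("cfo@example.com", "video", 25_000_000)
    print(approve(req, "blue-harbor-42", confirmed_by_callback=False))  # False
    print(approve(req, "blue-harbor-42", confirmed_by_callback=True))   # True
```

The key design choice is that a convincing voice or face changes nothing in the decision: approval depends only on secrets and channels the attacker is unlikely to control at the same time.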

🌍 The Future of Deepfake Threats

As deepfake technology becomes more advanced, scams will only become more personalized, scalable, and convincing. Attackers may even combine voice, video, and AI-generated emails in multi-channel campaigns, making phishing nearly indistinguishable from real interactions.

In the near future, cybersecurity may rely on AI to fight AI—developing advanced detection systems to stay one step ahead.

✅ Final Thoughts

Deepfake voice and video scams are redefining phishing in 2025. The line between what’s real and what’s fake has never been blurrier. Individuals and businesses must adopt multi-layered security strategies, prioritize verification over trust, and embrace AI-based defenses.

The message is clear: seeing (or hearing) is no longer believing.