Welcome to the exciting world of machine learning (ML)—the powerhouse behind everything from Netflix recommendations to self-driving cars. If you’re dipping your toes into ML for the first time, it can feel overwhelming. But fear not! At its core, ML is about teaching computers to learn from data, just like humans do, but without the coffee breaks. In this beginner-friendly guide, we’ll break down the three main types of ML: supervised learning, unsupervised learning, and reinforcement learning. We’ll also cover the essential steps of data preparation and model evaluation to ensure your models aren’t just smart, but reliable too.

Whether you’re a curious coder, a business analyst, or just someone fascinated by AI, this post will equip you with the fundamentals. Let’s dive in!

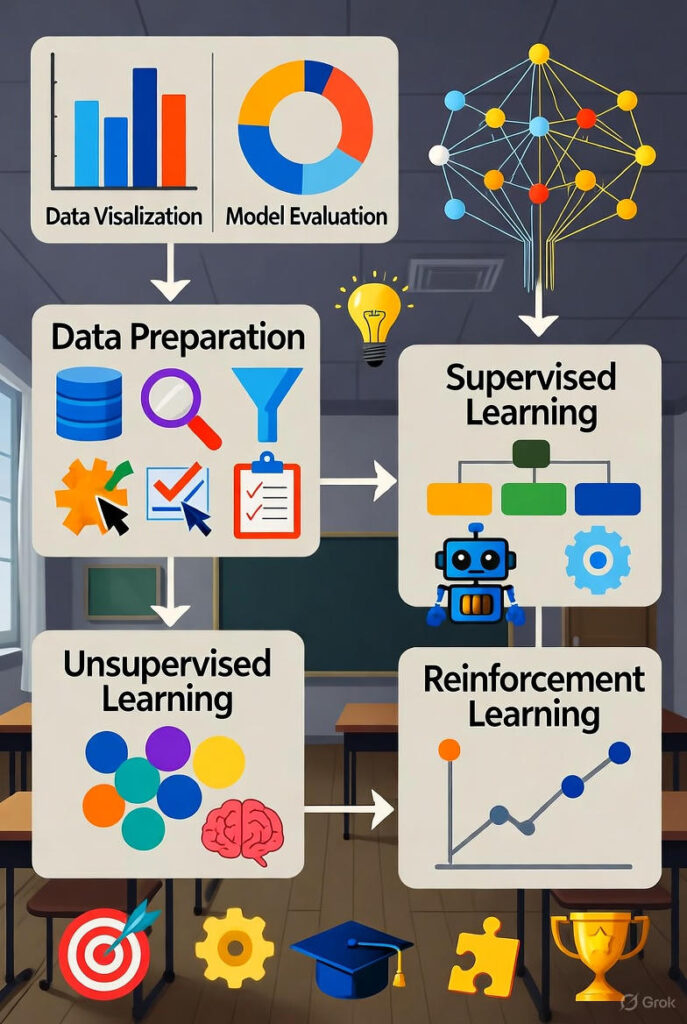

The Building Blocks: Types of Machine Learning

Machine learning isn’t one-size-fits-all. Instead, it branches into paradigms based on how the algorithm learns from data. Think of it as different teaching styles: some provide answer keys (supervised), others let students explore freely (unsupervised), and a few reward trial-and-error (reinforcement).

1. Supervised Learning: Learning with a Guide

Supervised learning is like studying with a textbook full of solved examples. Here, the algorithm is trained on labeled data—meaning each input (e.g., an email) comes paired with the correct output (e.g., “spam” or “not spam”). The goal? Predict outcomes for new, unseen data.

- Key Algorithms: Linear regression for predicting numbers (like house prices), logistic regression for categories (like yes/no decisions), and support vector machines for classification.

- Real-World Example: Email filters that learn from your “marked as spam” habits to auto-sort your inbox.

- Pros: High accuracy when labels are plentiful. Cons: Labeling data is time-consuming and expensive.

Supervised models account for a large share of production ML systems, from medical diagnostics to stock predictions.
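
To make the idea concrete, here is a minimal sketch of supervised learning using only the Python standard library: a one-feature linear regression fitted by ordinary least squares. The house sizes and prices are made-up illustrative numbers, and `fit_line` and `predict` are hypothetical helper names, not part of any library.

```python
from statistics import mean


def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    x_bar, y_bar = mean(xs), mean(ys)
    # Spread of the inputs, and how inputs and labels vary together.
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


def predict(model, x):
    """Apply a fitted (slope, intercept) pair to a new input."""
    slope, intercept = model
    return slope * x + intercept


# Labeled training data (made up): size in square metres -> price in thousands.
sizes = [50, 70, 90, 110]
prices = [150, 210, 270, 330]

model = fit_line(sizes, prices)
estimate = predict(model, 100)  # prediction for an unseen 100 m² house
print(model, estimate)
```

Because the toy prices are exactly three times the size, the fitted line has slope 3 and intercept 0, so the unseen 100 m² house comes out at 300. In practice a library such as scikit-learn (its `LinearRegression` estimator) handles many features at once.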

2. Unsupervised Learning: Discovering Patterns on Your Own

No labels? No problem! Unsupervised learning thrives on unlabeled data, where the algorithm sifts through raw info to find hidden structures, clusters, or anomalies. It’s like exploring a new city without a map—you uncover neighborhoods (clusters) or trends organically.

- Key Algorithms: K-means clustering to group similar items (e.g., customer segments), principal component analysis (PCA) for simplifying complex data, and autoencoders for anomaly detection.

- Real-World Example: Streaming services like Spotify can use clustering to group listeners with similar habits and recommend playlists, without needing explicit “like/dislike” tags.

- Pros: Great for exploratory analysis and handling massive datasets. Cons: Results can be subjective and harder to validate.

This approach has grown with the rise of big data, helping businesses uncover insights from unstructured sources like social media feeds.
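
As a rough illustration of clustering, the sketch below implements a bare-bones k-means loop in plain Python. The customer figures, the choice of starting centroids, and the function name `kmeans` are all assumptions made for the example. Real implementations pick starting points more carefully and stop when the centroids no longer move.

```python
import math


def kmeans(points, centroids, iterations=10):
    """Alternate between assigning points and re-centering, a fixed number of times."""
    for _ in range(iterations):
        # Assignment step: each point joins the cluster of its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)),
                          key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: each centroid moves to the mean of its points
        # (an empty cluster keeps its old centroid).
        centroids = [
            tuple(sum(axis) / len(cluster) for axis in zip(*cluster)) if cluster else old
            for cluster, old in zip(clusters, centroids)
        ]
    return centroids, clusters


# Unlabeled data (made up): (visits per month, average spend) per customer.
customers = [(1, 10), (2, 12), (1.5, 11), (10, 100), (11, 95), (9, 105)]

# Start from two of the data points; this choice is arbitrary.
centroids, clusters = kmeans(customers, centroids=[(1, 10), (10, 100)])
print(centroids)
print(clusters)
```

No labels were supplied, yet the loop separates the occasional low spenders from the frequent high spenders purely from the distances between points.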

3. Reinforcement Learning: Trial, Error, and Rewards

Imagine training a dog: you reward good tricks and gently correct bad ones. Reinforcement learning (RL) works similarly, where an agent learns by interacting with an environment, maximizing cumulative rewards over time. No fixed dataset—instead, it’s all about actions, feedback, and iteration.

- Key Algorithms: Q-learning for decision-making in games, policy gradients for complex tasks like robotics, and deep RL (e.g., AlphaGo), which uses neural networks to estimate which actions lead to the highest reward.

- Real-World Example: Game-playing agents (such as AlphaZero, which learned chess through self-play) or, in principle, optimizing delivery routes for companies like UPS, where the “reward” is fuel saved.

- Pros: Excels in dynamic, sequential decisions. Cons: Computationally intensive and can take ages to converge.

RL is a popular choice in robotics and autonomous systems, and interest in industrial uses such as factory automation keeps growing.
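
The agent, environment, and reward loop can be sketched with tabular Q-learning on a toy problem. Everything here is an assumption made for illustration: a five-cell corridor where the agent starts at the left end and earns a reward of 1 for reaching the right end, a learning rate of 0.5, and a discount of 0.9. For simplicity the agent explores by picking actions uniformly at random rather than with the more common epsilon-greedy rule. Q-learning is off-policy, so it can still learn the value of acting greedily.

```python
import random

N_STATES = 5          # corridor cells 0..4; cell 4 is the goal
ACTIONS = (0, 1)      # 0 = step left, 1 = step right
ALPHA, GAMMA = 0.5, 0.9


def step(state, action):
    """Move along the corridor; reaching the last cell pays a reward of 1."""
    next_state = max(0, state - 1) if action == 0 else state + 1
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=500, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = rng.choice(ACTIONS)  # explore uniformly at random
            next_state, reward, done = step(state, action)
            # Q-learning update: nudge the estimate toward the reward
            # plus the discounted value of the best next action.
            target = reward + (0.0 if done else GAMMA * max(q[next_state]))
            q[state][action] += ALPHA * (target - q[state][action])
            state = next_state
    return q


q_table = train()
# Greedy policy: the best-valued action in each non-goal cell.
policy = [max(ACTIONS, key=lambda a: q_table[s][a]) for s in range(N_STATES - 1)]
print(policy)
```

After training, the greedy policy picks "right" in every cell, even though the agent was never told where the goal is. It only ever saw rewards.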

Data Preparation: The Unsung Hero of ML Success

Garbage in, garbage out—that’s the ML mantra. Before feeding data to any model, preparation is crucial. It’s about cleaning, transforming, and structuring your dataset to make it model-ready. Skip this, and your predictions will be as reliable as a weather forecast from a coin flip.

Here’s a step-by-step rundown:

- Data Collection: Gather from reliable sources—databases, APIs, or sensors. Aim for diversity to avoid biases.

- Cleaning: Handle missing values (impute with means or drop rows), remove duplicates, and fix outliers. Tools like Pandas in Python make this a breeze.

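- Feature Engineering: Create new variables (e.g., “age group” from “birth year”) and scale/normalize features so that no single feature dominates (e.g., using Min-Max scaling).

- Splitting: Divide into train (70-80%), validation (10-15%), and test (10-15%) sets to mimic real-world use. Fit any scaler or encoder on the training set only, then apply it to the other two, so that no information leaks from held-out data.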

- Encoding: Convert categoricals to numbers (one-hot encoding for nominal data) and handle text/images if needed.
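
Three of the steps above (mean imputation, Min-Max scaling, and one-hot encoding) are simple enough to sketch by hand. The helper names and the tiny columns below are hypothetical. On a real project you would more likely reach for pandas or scikit-learn, and compute the fill value and the min/max from the training split alone.

```python
from statistics import mean


def impute_mean(values):
    """Replace missing entries (None) with the mean of the observed ones."""
    observed = [v for v in values if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in values]


def min_max_scale(values):
    """Rescale values linearly so the smallest maps to 0 and the largest to 1."""
    lo, hi = min(values), max(values)
    if hi == lo:  # constant column: avoid dividing by zero
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def one_hot(values):
    """Turn each category into a 0/1 vector with one slot per distinct category."""
    categories = sorted(set(values))
    encoded = [[1 if v == c else 0 for c in categories] for v in values]
    return encoded, categories


ages = [20, None, 40, 60]            # one missing value
ages_filled = impute_mean(ages)      # gap filled with the mean of 20, 40, 60
ages_scaled = min_max_scale(ages_filled)

colors = ["red", "green", "red"]     # nominal feature with no natural order
colors_encoded, color_names = one_hot(colors)

print(ages_filled, ages_scaled)
print(color_names, colors_encoded)
```

One-hot encoding suits nominal data because it avoids implying an order: mapping red to 1 and green to 2 would suggest green is "more" than red.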

Pro Tip: In 2025, automated tools like AutoML pipelines (e.g., Google Cloud’s Vertex AI) are streamlining this, but understanding the basics keeps you in control.
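
Whatever tooling you use, it helps to know what the splitting step does under the hood. Below is a minimal sketch of a shuffled train/validation/test split. The function name, the 70/15/15 default, and the fixed seed are assumptions chosen for the example.

```python
import random


def train_val_test_split(rows, val_frac=0.15, test_frac=0.15, seed=0):
    """Shuffle a copy of the rows, then slice off test and validation sets."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_test = round(len(shuffled) * test_frac)
    n_val = round(len(shuffled) * val_frac)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test


rows = list(range(100))  # stand-in for 100 data records
train, val, test = train_val_test_split(rows)
print(len(train), len(val), len(test))
```

Shuffling first matters when the data arrives sorted, for example by date or by class. For time series you would usually split chronologically instead, so the model is never trained on the future.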

Model Evaluation: Measuring What Matters

You’ve built your model. Now how do you know if it’s a winner? Evaluation quantifies performance, catches overfitting (memorizing the training data instead of learning patterns that generalize), and guides improvements. Metrics vary by task, but here are the essentials:

| Learning Type | Common Metrics | What It Means |
|---|---|---|
| Supervised (Regression) | Mean Squared Error (MSE), R² Score | How close predictions are to actuals (lower MSE is better); R² shows explained variance (closer to 1 is better). |
| Supervised (Classification) | Accuracy, Precision, Recall, F1-Score | Accuracy is the overall share of correct predictions; precision and recall track false positives and false negatives (vital for imbalanced data like fraud detection). |
| Unsupervised (Clustering) | Silhouette Score, Davies-Bouldin Index | Measure cluster cohesion and separation (higher silhouette or lower Davies-Bouldin = tighter, more distinct groups). |
| Reinforcement | Cumulative Reward, Episode Length | Total reward earned over an episode; whether shorter episodes are better depends on the task (good when the goal is to finish quickly, bad when the goal is to survive longer). |
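
The two regression metrics in the table are short formulas, sketched here in plain Python. The function names mirror the metric names but are defined locally for the example, and the numbers are made up.

```python
def mean_squared_error(y_true, y_pred):
    """Average of the squared differences between actual and predicted values."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def r2_score(y_true, y_pred):
    """1 minus (residual error / error of always predicting the mean)."""
    y_bar = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - y_bar) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot


actual = [3, 5, 7, 9]
predicted = [2, 5, 8, 9]  # off by one on two of the four points

mse = mean_squared_error(actual, predicted)
r2 = r2_score(actual, predicted)
print(mse, r2)
```

Here the MSE is 0.5 and R² is 0.9, meaning the predictions explain about 90% of the variance in the actual values.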

- Cross-Validation: Test on multiple data folds for robust scores.

- Confusion Matrix: Visualize true vs. predicted for classification.

- Hyperparameter Tuning: Use grid search or Bayesian optimization to fine-tune.
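
Cross-validation is mostly bookkeeping: each fold takes one turn as the validation set while the rest is used for training. A minimal sketch of that index bookkeeping follows. The generator name `k_fold_indices` is hypothetical, and it assumes the data has already been shuffled.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, validation_indices) for each of k folds."""
    indices = list(range(n_samples))
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, val_idx
        start += size


folds = list(k_fold_indices(n_samples=10, k=3))
for train_idx, val_idx in folds:
    print("train:", train_idx, "validate:", val_idx)
```

You would train a fresh model on each `train_idx`, score it on the matching `val_idx`, and average the scores. Every sample is used for validation exactly once, which makes the estimate less dependent on one lucky or unlucky split.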

Remember, the best metric aligns with your goal—e.g., recall over accuracy for life-saving apps like cancer detection.
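
A small worked example shows why accuracy alone can mislead on imbalanced data. The sketch below counts the four cells of a binary confusion matrix and derives the classification metrics from them. The labels are made up, and `confusion_counts` and `binary_metrics` are hypothetical helper names.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count true positives, false positives, false negatives, true negatives."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    return tp, fp, fn, tn


def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for one positive class."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred, positive)
    accuracy = (tp + tn) / len(y_true)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Ten patients, two of whom actually have the condition (label 1).
y_true = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
# The model catches only one of the two positive cases.
y_pred = [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]

metrics = binary_metrics(y_true, y_pred)
print(metrics)
```

Accuracy comes out at 90%, which sounds good, yet recall is only 50%: half of the real cases were missed. That gap is exactly what the confusion matrix makes visible.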

Wrapping It Up: Your First Steps into ML

Machine learning boils down to these pillars: choose your learning type based on data and goals, prep meticulously, evaluate rigorously, and iterate. Start small—grab a dataset from Kaggle, code a simple linear regression in scikit-learn, and watch the magic unfold.